Exploiting Unlabeled Data with Vision and Language Models for Object Detection

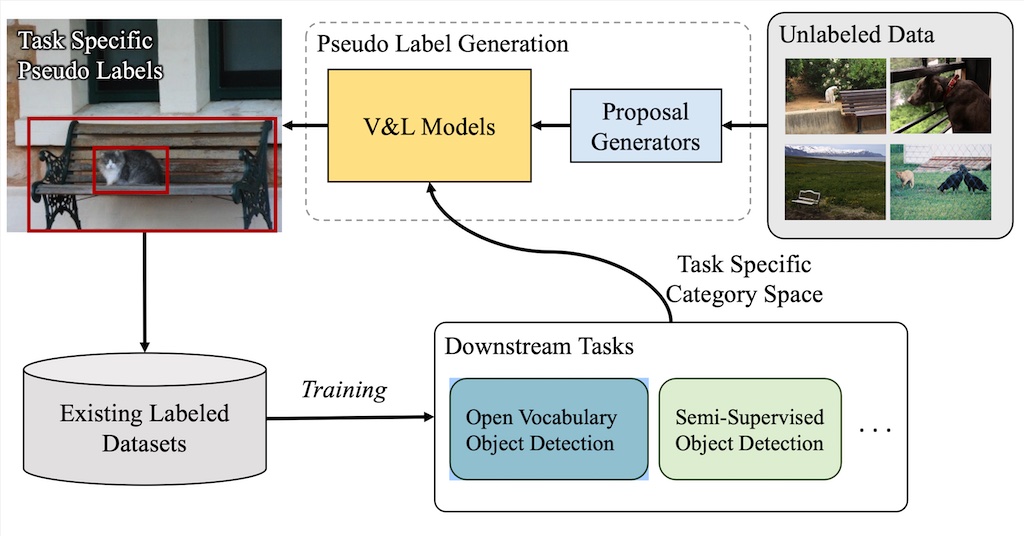

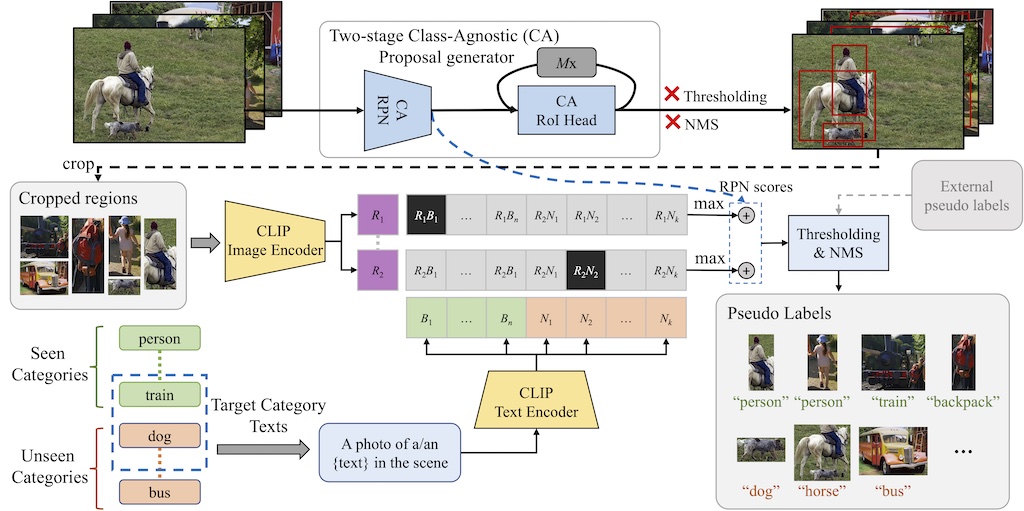

We propose a simple but effective way to mine unlabeled images using recently proposed vision and language (V&L) models to generate pseudo labels for both known and novel categories, which suits two tasks: semi-supervised object detection (SSOD) and open-vocabulary detection (OVD). The contributions of our work are as follows: 1) We leverage V&L models to improve object detection frameworks by generating pseudo labels on unlabeled data. 2) We propose a simple but effective strategy to improve the localization quality of pseudo labels scored with the V&L model CLIP. 3) We achieve state-of-the-art results for novel categories in the COCO open-vocabulary detection setting. 4) We showcase the benefits of our approach, VL-PLM, in a semi-supervised object detection setting.
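To make the idea of V&L-scored pseudo labels more concrete, here is a minimal sketch of how candidate boxes might be filtered into pseudo labels. It is an illustration under stated assumptions, not the implementation behind VL-PLM: the `Candidate` fields, the averaging rule in `fuse`, both thresholds, and the greedy per-class suppression (a standard non-maximum suppression, swapped in here to handle overlapping boxes) are all hypothetical choices. In practice `vl_score` would come from a V&L model such as CLIP and `loc_score` from a region proposer; here both are plain numbers.

```python
from dataclasses import dataclass
from typing import List, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2)


@dataclass
class Candidate:
    box: Box
    label: str
    loc_score: float  # hypothetical localization confidence in [0, 1]
    vl_score: float   # hypothetical V&L (e.g. CLIP) score for `label` in [0, 1]


def fuse(c: Candidate) -> float:
    """Assumed fusion rule: plain average of the two scores."""
    return 0.5 * (c.loc_score + c.vl_score)


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    iw = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    ih = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = iw * ih
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def mine_pseudo_labels(
    cands: List[Candidate],
    score_thresh: float = 0.8,  # assumed value, for illustration only
    iou_thresh: float = 0.5,    # assumed value, for illustration only
) -> List[Candidate]:
    """Keep confident candidates and drop same-class near-duplicates."""
    kept: List[Candidate] = []
    for c in sorted(cands, key=fuse, reverse=True):
        if fuse(c) < score_thresh:
            break  # candidates are sorted, so the rest score lower
        duplicate = any(
            k.label == c.label and iou(k.box, c.box) >= iou_thresh for k in kept
        )
        if not duplicate:
            kept.append(c)
    return kept


candidates = [
    Candidate((0, 0, 10, 10), "cat", 0.90, 0.90),
    Candidate((1, 1, 10, 10), "cat", 0.80, 0.90),    # overlaps the first cat box
    Candidate((20, 20, 30, 30), "dog", 0.30, 0.95),  # poorly localized
    Candidate((50, 50, 60, 60), "dog", 0.85, 0.80),
]
pseudo_labels = mine_pseudo_labels(candidates)
```

With these toy inputs, the overlapping cat box is suppressed and the poorly localized dog box falls below the fused threshold, so a high V&L score alone is not enough to produce a pseudo label.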

Collaborators: Shiyu Zhao, Zhixing Zhang, Long Zhao, Vijay Kumar B.G., Anastasis Stathopoulos, Manmohan Chandraker, Dimitris Metaxas

Main Results

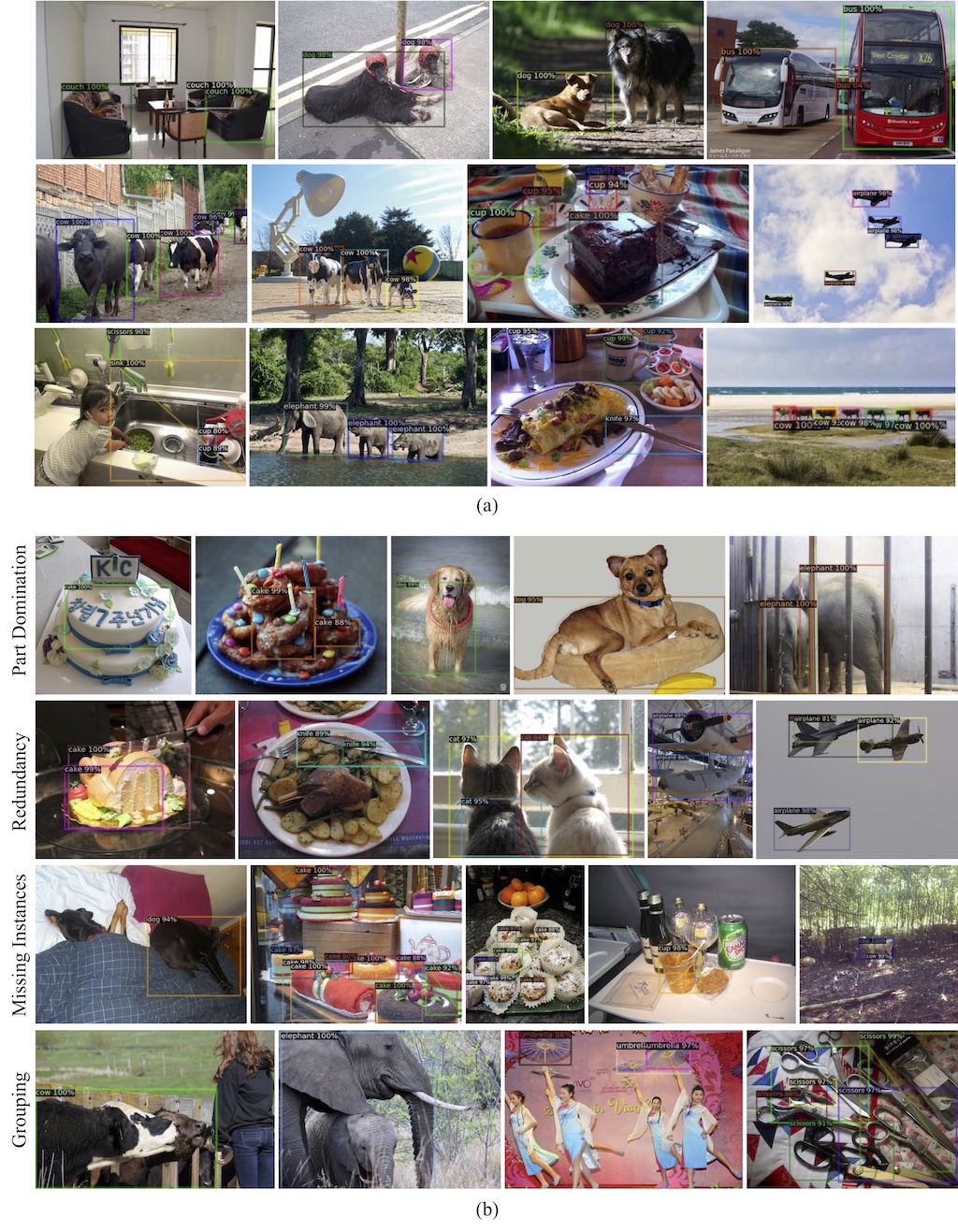

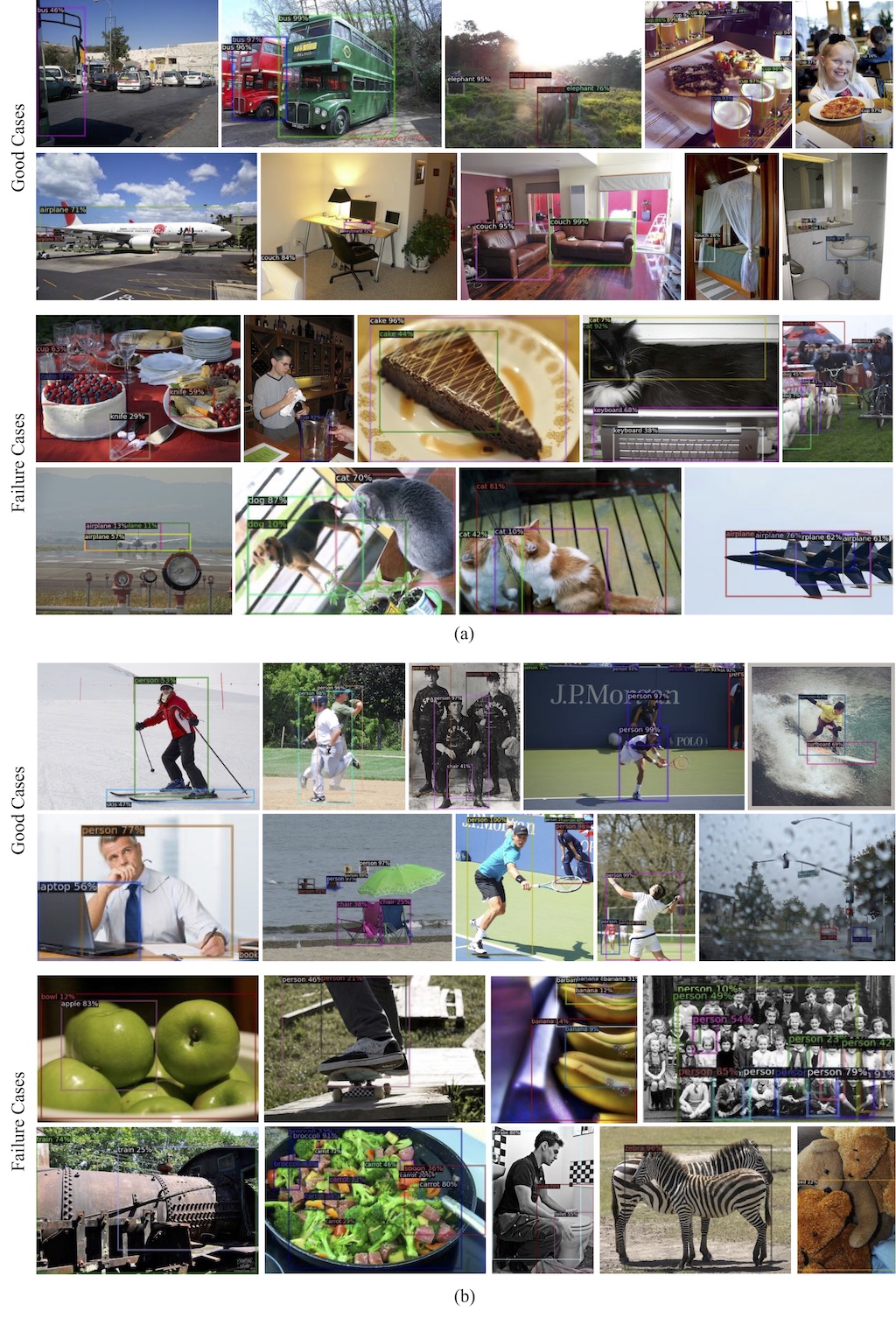

Visualizations of the pseudo labels (PLs) from VL-PLM. Only boxes for target categories in the scene are shown. (a) Good cases. All target objects are located with appropriate boxes. (b) The most common types of failure cases in our PLs, i.e., part domination, redundant boxes, missing instances, and grouped instances.

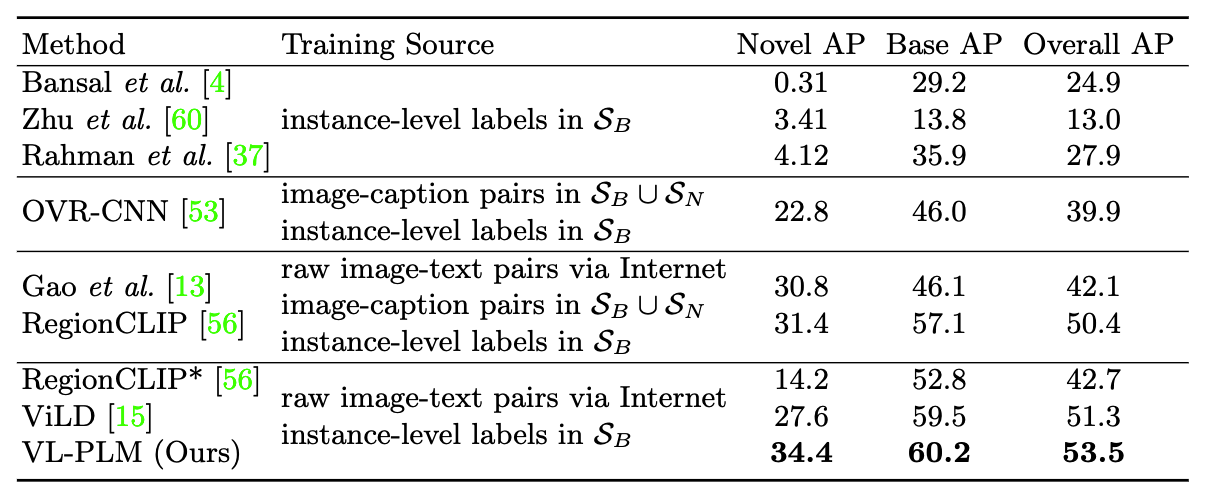

Quantitative evaluation of our VL-PLM approach on the open-vocabulary object detection task on the COCO 2017 dataset.

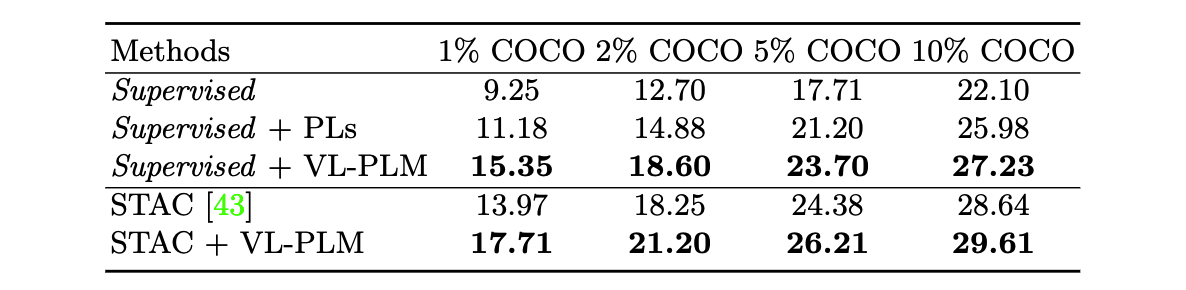

Quantitative evaluation of our VL-PLM approach on the semi-supervised object detection task on the COCO 2017 dataset.

Visualization of the final detection results. Only boxes for target categories in the scene are shown. (a) Novel categories as the target. (b) Base categories as the target. The major failure cases fall into three types: missing instances, redundant boxes, and grouped instances.

Acknowledgements

All images were taken from the public MS COCO dataset. Please see the dataset for more information on the individual image sources. This webpage template is inspired by Colorful Image Colorization.