Unsupervised & Semi-Supervised Domain Adaptation for Action Recognition From Drones

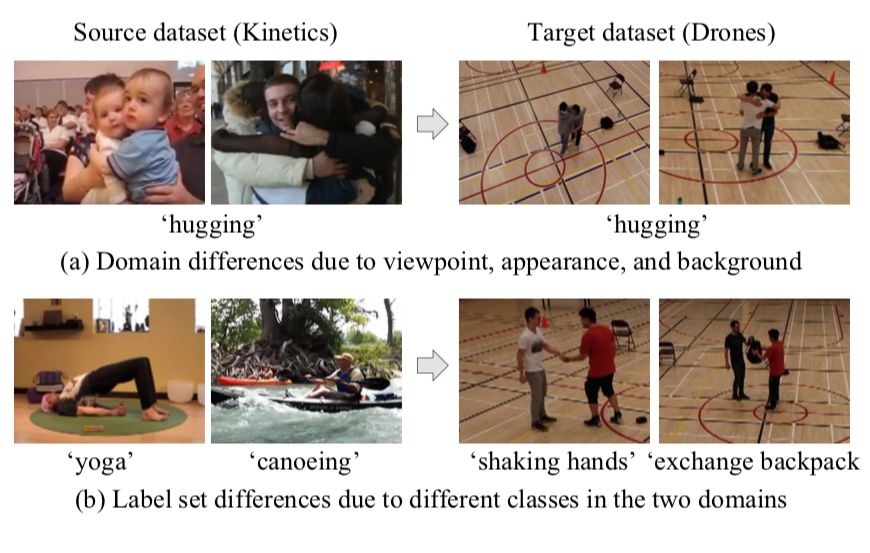

WACV 2020 | We address the problem of human action classification in drone videos. Due to the high cost of capturing and labeling large-scale drone videos with diverse actions, we present unsupervised and semi-supervised domain adaptation approaches that leverage both existing, fully annotated action recognition datasets and unannotated (or only a few annotated) videos from drones. To study the emerging problem of drone-based action recognition, we create a new dataset, NEC-Drone, containing 5,250 videos to evaluate the task. We tackle both problem settings, with the same and with different action label sets for the source (e.g., the Kinetics dataset) and target domains (drone videos).

Collaborators: Jinwoo Choi, Gaurav Sharma, Manmohan Chandraker, Jia-Bin Huang

Project Site & Data Set

We address the problem of human action classification in drone videos. Due to the high cost of capturing and labeling large-scale drone videos with diverse actions, we present unsupervised and semi-supervised domain adaptation approaches that leverage both existing, fully annotated action recognition datasets and unannotated (or only a few annotated) videos from drones. To study the emerging problem of drone-based action recognition, we create a new dataset, NEC-Drone, containing 5,250 videos to evaluate the task. We tackle both problem settings, with 1) the same and 2) different action label sets for the source (e.g., the Kinetics dataset) and target domains (drone videos). We present a combination of video-based and instance-based adaptation methods, paired with either a classifier or an embedding-based framework, to transfer knowledge from source to target. Our results show that the proposed adaptation approach substantially improves performance on these challenging and practical tasks.

Unsupervised and Semi-Supervised Domain Adaptation for Action Recognition from Drones

In IEEE Winter Conference on Applications of Computer Vision (WACV) 2020


Dataset

The zip archive for the NEC-Drone dataset for action recognition from drones contains:

- A readme.txt file

- 2,079 annotated videos (frames) and their annotations.

- 3,161 unannotated videos (frames).

To use the dataset, download the zip archive, which is password protected. Please also download the license agreement and send a signed copy to ma-code-request-nec-drone@nec-labs.com; we will then send you the password for the archive. Please allow a few business days for processing.
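Once you have the password, the archive can be unpacked with any zip tool. As a minimal sketch, the Python snippet below extracts a password-protected zip using the standard `zipfile` module. The function name `extract_archive` and the file and folder names in the usage comment are hypothetical and not part of the dataset release. Note that `zipfile` can only decrypt the legacy zip encryption scheme; if the archive uses AES encryption, a dedicated tool such as 7-Zip would be needed instead.

```python
import zipfile
from pathlib import Path


def extract_archive(archive_path, password, dest_dir):
    """Extract a password-protected zip into dest_dir and return its member names.

    Hypothetical helper for illustration; not part of the NEC-Drone release.
    """
    dest = Path(dest_dir)
    dest.mkdir(parents=True, exist_ok=True)
    with zipfile.ZipFile(archive_path) as zf:
        # zipfile expects the password as bytes rather than str.
        zf.extractall(path=dest, pwd=password.encode("utf-8"))
        return zf.namelist()


# Example call (archive name and destination folder are assumptions):
# names = extract_archive("nec_drone.zip", "<password from NEC Labs>", "nec_drone")
```

The returned list of member names can be used to confirm that the readme file and the video frame folders were unpacked as expected.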