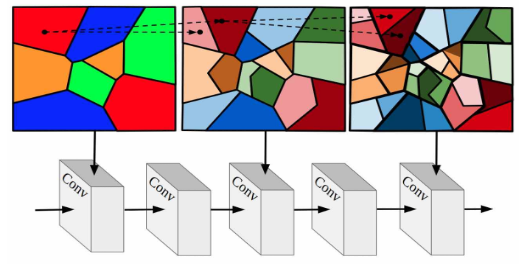

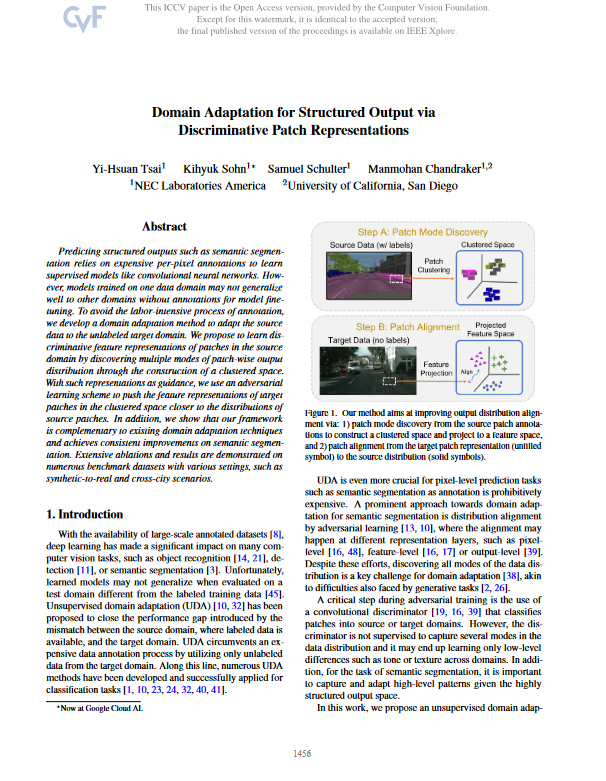

Our method aims to improve output distribution alignment via: 1) patch mode discovery from the source patch annotations, which constructs a clustered space and projects it to a feature space, and 2) patch alignment from the target patch representation (unfilled symbol) to the source distribution (solid symbols).

Predicting structured outputs such as semantic segmentation relies on expensive per-pixel annotations to train supervised models like convolutional neural networks. However, models trained on one data domain may not generalize well to other domains that lack annotations for model fine-tuning. To avoid the labor-intensive annotation process, we develop a domain adaptation method to adapt the source data to the unlabeled target domain. To this end, we propose to learn discriminative feature representations of patches in the source domain by discovering multiple patch modes through the construction of a clustered space. With such representations as guidance, we then use an adversarial learning scheme to push the feature representations of target patches closer to the distributions of source patches. In addition, we show that our framework is complementary to existing domain adaptation techniques and achieves consistent improvements on semantic segmentation. Extensive ablation studies and experiments are conducted on numerous benchmark datasets with various settings, such as synthetic-to-real and cross-city scenarios.
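
To make the idea of patch mode discovery concrete, the following is a minimal sketch rather than the actual implementation. It assumes that each source patch annotation is summarized as a normalized class histogram and that modes are found with a plain k-means; neither choice is stated in the text above. The function names `patch_histograms` and `discover_patch_modes` are hypothetical, and the adversarial alignment step is not shown.

```python
import numpy as np


def patch_histograms(label_map, patch_size, num_classes):
    """Split an (H, W) integer label map into non-overlapping square patches
    and return one normalized class histogram per patch.

    Assumes every label lies in [0, num_classes).
    """
    height, width = label_map.shape
    hists = []
    for top in range(0, height - patch_size + 1, patch_size):
        for left in range(0, width - patch_size + 1, patch_size):
            patch = label_map[top:top + patch_size, left:left + patch_size]
            counts = np.bincount(patch.ravel(), minlength=num_classes)
            hists.append(counts / counts.sum())
    return np.array(hists)


def discover_patch_modes(points, k, num_iters=50):
    """Cluster patch histograms with k-means (an assumed choice of clustering).

    Centers are initialized deterministically with farthest-point selection,
    so duplicate histograms do not collapse two centers onto one point.
    Returns (centers, mode index per patch).
    """
    centers = [points[0]]
    for _ in range(1, k):
        chosen = np.array(centers)
        dist_to_nearest = np.linalg.norm(
            points[:, None, :] - chosen[None, :, :], axis=2
        ).min(axis=1)
        centers.append(points[dist_to_nearest.argmax()])
    centers = np.array(centers, dtype=float)

    for _ in range(num_iters):
        dists = np.linalg.norm(points[:, None, :] - centers[None, :, :], axis=2)
        assign = dists.argmin(axis=1)
        for j in range(k):
            members = points[assign == j]
            if len(members) > 0:  # keep the old center if a cluster is empty
                centers[j] = members.mean(axis=0)

    # Recompute assignments so they match the final centers.
    dists = np.linalg.norm(points[:, None, :] - centers[None, :, :], axis=2)
    return centers, dists.argmin(axis=1)
```

In this sketch, the mode index assigned to each source patch could serve as a label for learning the clustered space, and the number of modes `k` would be a hyperparameter to tune.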