Learning to Adapt Structured Output Space for Semantic Segmentation

Publication Date: 6/18/2018

Event: Conference on Computer Vision and Pattern Recognition (CVPR) 2018, Salt Lake City, UT USA

Reference: pp 7472-7481

Authors: Yi-Hsuan Tsai, University of California, Merced, NEC Laboratories America, Inc.; Wei-Chih Hung, University of California, Merced; Samuel Schulter, NEC Laboratories America, Inc.; Kihyuk Sohn, NEC Laboratories America, Inc.; Ming-Hsuan Yang, University of California, Merced, NEC Laboratories America, Inc.; Manmohan Chandraker, NEC Laboratories America, Inc.

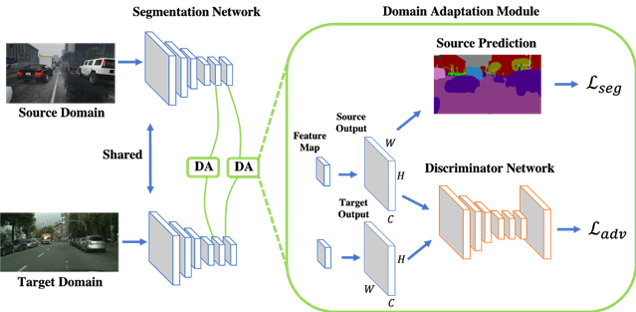

Abstract: Convolutional neural network-based approaches for semantic segmentation rely on supervision with pixel-level ground truth, but may not generalize well to unseen image domains. As the labeling process is tedious and labor intensive, developing algorithms that can adapt source ground truth labels to the target domain is of great interest. In this paper, we propose an adversarial learning method for domain adaptation in the context of semantic segmentation. Considering semantic segmentations as structured outputs that contain spatial similarities between the source and target domains, we adopt adversarial learning in the output space. To further enhance the adapted model, we construct a multi-level adversarial network to effectively perform output space domain adaptation at different feature levels. Extensive experiments and ablation study are conducted under various domain adaptation settings, including synthetic-to-real and cross-city scenarios. We show that the proposed method performs favorably against the state-of-the-art methods in terms of accuracy and visual quality.
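As a rough illustration of what adversarial learning in the output space could look like, the following is a minimal pure-Python sketch, not the authors' implementation. It assumes a toy per-pixel logistic discriminator applied to softmax segmentation outputs, a binary cross-entropy objective with source labeled 1 and target labeled 0, and a weighted sum of adversarial losses over two output levels. All function names, the toy logits, the discriminator weights, and the level weights are hypothetical and chosen only for illustration; none of them come from the abstract. Sharing one discriminator across levels is a further simplification.

```python
import math

def softmax(logits):
    """Numerically stable softmax over one pixel's class scores."""
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]

def discriminator(probs, weights, bias):
    """Toy discriminator (assumption): logistic regression on class probabilities.
    Returns the estimated probability that the prediction is from the source domain."""
    score = sum(w * p for w, p in zip(weights, probs)) + bias
    return 1.0 / (1.0 + math.exp(-score))

def bce(p, label, eps=1e-12):
    """Binary cross-entropy for a single probability and a 0/1 label."""
    return -(label * math.log(p + eps) + (1.0 - label) * math.log(1.0 - p + eps))

def discriminator_loss(source_out, target_out, weights, bias):
    """Discriminator objective: source outputs labeled 1, target outputs labeled 0."""
    losses = [bce(discriminator(px, weights, bias), 1.0) for px in source_out]
    losses += [bce(discriminator(px, weights, bias), 0.0) for px in target_out]
    return sum(losses) / len(losses)

def adversarial_loss(target_out, weights, bias):
    """Segmentation-side objective: make target outputs look like source (label 1)."""
    losses = [bce(discriminator(px, weights, bias), 1.0) for px in target_out]
    return sum(losses) / len(losses)

def multi_level_adversarial_loss(target_levels, lambdas, weights, bias):
    """Weighted sum of adversarial losses over several output levels."""
    return sum(lam * adversarial_loss(out, weights, bias)
               for lam, out in zip(lambdas, target_levels))

# Toy "images": each is a flat list of pixels, each pixel a list of 3 class logits.
source_out = [softmax(px) for px in [[2.0, 0.5, -1.0], [0.1, 1.5, 0.3]]]
target_out = [softmax(px) for px in [[0.2, 0.1, 0.0], [1.0, 1.1, 0.9]]]
aux_target_out = [softmax(px) for px in [[0.0, 0.3, 0.1], [0.5, 0.4, 0.6]]]

weights, bias = [1.0, -0.5, 0.25], 0.0   # hypothetical discriminator parameters
lambdas = [0.001, 0.0002]                # hypothetical per-level loss weights

d_loss = discriminator_loss(source_out, target_out, weights, bias)
adv_loss = multi_level_adversarial_loss([target_out, aux_target_out],
                                        lambdas, weights, bias)
print("discriminator loss:", d_loss)
print("multi-level adversarial loss:", adv_loss)
```

In a real training loop, the two objectives would be minimized alternately: the discriminator on `discriminator_loss`, and the segmentation network on its supervised source loss plus the weighted adversarial term. The discriminator here sees only class-probability outputs rather than intermediate features, which is the sense in which the adaptation happens in the output space.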

Publication Link: https://openaccess.thecvf.com/content_cvpr_2018/html/Tsai_Learning_to_Adapt_CVPR_2018_paper.html

Supplemental Publication Link: https://openaccess.thecvf.com/content_cvpr_2018/Supplemental/1435-supp.pdf